
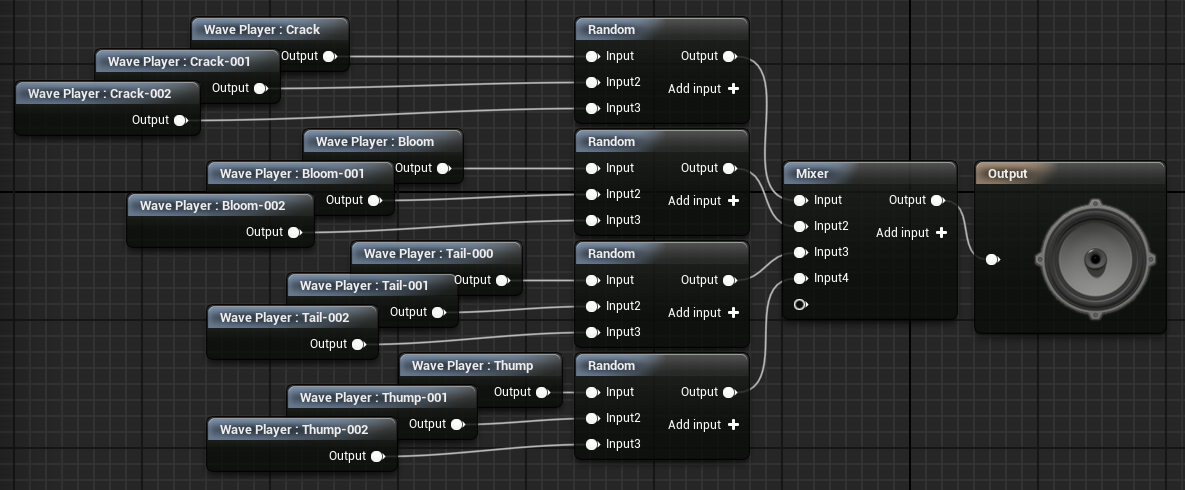

Real-time audio technology is powerful. Very powerful. Imagine being able to explore a digital world where the environment (where this article is concerned, sound!) is so realistic that we can't differentiate between the real world and the digital - i.e. the Matrix (but, perhaps, without the agents...). That is the power of real-time audio technology.

The gaming industry is, at the moment, responsible for the commercial pioneering of interactive audio technologies. Such technologies should mimic how we listen to our world, so that the digital world is 'believable' and much like our own. This is done so that we relate directly to the game we are playing, or feel better immersed within it, and video games try to do this to the best of their ability (e.g. Grand Theft Auto).

So what audio technologies are being used at the moment within video games and how far away is real-time audio technology?

To date, the gaming industry uses a type of audio technology that mimics the behaviour of real-life sound through the use of computer algorithms. This is called procedural audio. Procedural audio allows the computer to instantly generate convincing music - or sound effects - from material that has been pre-recorded at source. Simply put, a procedural audio system self-generates its audio output (music or sound effects) by making clever use of previously recorded (the important point, here) sound files; I'll briefly explain the technical points of how this works later. Perhaps the most famous example of procedural audio is Hello Games' No Man's Sky.

So, what is procedural audio and why was it created?

How do we make gaming, or interactive art, more immersive? As briefly mentioned earlier, we simply mimic how we perceive real life through our senses (sight and hearing - in the case of this article, the latter).
Procedural audio was a step closer to achieving that, by allowing the computer to fool us into thinking that what we are hearing is unique every time we hear it (think of this in the context of an explosion sound effect). Technically, this is done by randomising the crucial components of a sound's envelope (ADSR: Attack, Decay, Sustain, Release), using a finite number of sound files. For example:

- To start, I take 5 recordings of an explosion
- I then cut each explosion sound wave into the components of an ADSR envelope (Attack, Decay, Sustain, Release)
- I then import all cut components into my game engine
- I feed the cut components into a randomisation mixer for each individual envelope stage (A, D, S, R); in this way, the engine will randomise, for example, which Attack of a sound wave (explosion) is played every time I trigger it
- Finally, I feed each envelope randomisation mixer into a main randomisation mixer for output
- The output is then a randomly generated, convincing sound - or explosion

This system therefore creates the potential for huge variation in what we hear while using only a finite number of raw recordings - and it does a good job (see image below for an example of how it works in Unreal Engine 4). Because it only plays back and recombines existing recordings, this method puts little strain on the CPU and is a dependable way of creating 'convincing' audio.

But the sky is the limit and, naturally, we need more immersion. Procedural audio can only get us so far. The answer is real-time audio.

What is Real-time audio?

Real-time audio is the natural response and successor to procedural audio because it allows us to create better (aural) user immersion within video games. This is achieved through the instant generation of sound and, unlike procedural audio, by using a system that can generate an infinite number of sounds, within video games, in response to human interaction.
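The difference between 'finite' and 'infinite' can be made concrete with the explosion example. The Python sketch below is only an illustration under stated assumptions: the segment names (such as `explosion_1_attack`) and the helper functions are hypothetical, no audio is actually loaded or played, and it is not how any particular game engine implements its mixers. It picks one pre-cut segment per envelope stage at random and counts how many distinct explosions the system can ever produce.

```python
import random

# The four envelope stages, in playback order.
STAGES = ("attack", "decay", "sustain", "release")


def build_segment_pools(num_recordings):
    """Return one pool of segment labels per stage (hypothetical file names)."""
    return {
        stage: [f"explosion_{i}_{stage}" for i in range(1, num_recordings + 1)]
        for stage in STAGES
    }


def random_explosion(pools, rng=random):
    """Pick one segment per stage at random, mimicking the per-stage mixers."""
    return [rng.choice(pools[stage]) for stage in STAGES]


def count_variations(pools):
    """Number of distinct outputs: the product of the pool sizes."""
    total = 1
    for stage in STAGES:
        total *= len(pools[stage])
    return total


pools = build_segment_pools(5)    # 5 recordings, each cut into 4 segments
print(random_explosion(pools))    # one randomly assembled explosion
print(count_variations(pools))    # 5 * 5 * 5 * 5 = 625
```

With 5 recordings the system can produce 625 different explosions, which is plenty to fool the ear but is still a fixed, finite set.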
By using an infinite and self-generative audio system we can therefore move closer to how we perceive real-world sounds, as the sounds we encounter in life are never finite, nor do they sound the same each time we perceive them. Think of the complexity of how we perceive sounds in real life through the following question: 'If I place a glass down upon a table, will it always sound the same?' The answer is quite clearly 'no'. This is because there are so many real-world (physical) variables at play when we hear the glass being placed, e.g.:

All of these elements would affect our aural perception of sound - something which procedural audio systems do not have the capability to consider. Real-time audio technology, however, does. Therefore, real-time audio's mission is to create a convincing and sophisticated system that will allow us to hear digital worlds that feel and behave just like our own. Then, and only then, will we be able to feel more immersed within digital worlds.

So, how do we achieve real-time audio?

The process of creating real-time audio systems is currently the subject of research and is not quite as simple as implementing procedural audio systems, for a number of reasons. The main ones are the potential strain real-time audio systems would place on CPU power and, perhaps more importantly, aesthetic considerations; i.e. how do we create convincing and aesthetically pleasing audio via computer generation and, ultimately, artificial intelligence? Can computers be artfully intelligent? Watch this space for a more detailed discussion of these questions and the technical nature of real-time audio mechanics.
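As a rough illustration of generating a sound instead of replaying one, here is a minimal Python sketch of a glass-like impact. It rests on assumptions that are not from this article: it uses a simple sum of decaying sine waves (often called modal synthesis) as a stand-in technique, and the mode frequencies, decay rates, `velocity` parameter and jitter ranges are invented for illustration. It is one possible approach, not a description of how a real-time audio system must work.

```python
import math
import random

SAMPLE_RATE = 44100  # samples per second

# Assumed (frequency in Hz, decay rate per second) pairs for a glass-like tone.
BASE_MODES = [(1200.0, 8.0), (2750.0, 12.0), (4100.0, 18.0)]


def synth_impact(velocity, duration=0.5, rng=random):
    """Synthesise one impact as a sum of exponentially decaying sine waves.

    velocity is a hypothetical impact strength between 0 and 1 that scales
    the loudness. Every call slightly detunes the modes, so no two impacts
    are sample-for-sample identical.
    """
    modes = [
        (freq * rng.uniform(0.97, 1.03), decay * rng.uniform(0.9, 1.1))
        for freq, decay in BASE_MODES
    ]
    samples = []
    for i in range(int(SAMPLE_RATE * duration)):
        t = i / SAMPLE_RATE
        value = sum(
            math.exp(-decay * t) * math.sin(2 * math.pi * freq * t)
            for freq, decay in modes
        )
        # Divide by the number of modes so the output stays within +/- velocity.
        samples.append(velocity * value / len(modes))
    return samples


hit = synth_impact(velocity=0.8)
print(len(hit))  # 22050 samples for half a second of audio
```

Every sample has to be calculated at the moment the sound is needed, which hints at where the CPU strain mentioned above comes from; whether the result sounds convincing is the aesthetic question.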

So what can we achieve from real-time audio within video games?

Simply put, the potential for better, even total, immersion. We have always been obsessed with escapism, with visiting or feeling a part of another world or space (see image below for an example of this in popular culture), and real-time audio technology is our next step in achieving that. Thanks for reading. Remember to follow this blog for more discussion on interactive audio technology!


Chris Rhodes: Musician and Sound Technologist attempting to find new music in an old world.

October 2022


Contact: Email - crhodes1992 [at] hotmail [dot] co [dot] uk
